The architectural paradigm of software development is undergoing a fundamental shift. We are moving from static microservices and manually triggered scripts to autonomous AI agents—entities capable of reasoning, planning, and executing multi-step tasks across disparate systems. While this "agentic" future promises unprecedented productivity, it introduces a systemic risk that current security frameworks are ill-equipped to handle: the explosion of Non-Human Identities (NHIs).

In traditional enterprise security, Identity and Access Management (IAM) has focused primarily on humans. We secure human access via Multi-Factor Authentication (MFA), Single Sign-On (SSO), and biometric verification. However, industry estimates commonly put the ratio in modern cloud environments at 10 to 45 non-human identities for every human user: service accounts, the keys and secrets that authenticate them, and now autonomous agents. As these agents gain the ability to provision infrastructure, access sensitive databases, and communicate with third-party APIs, the lack of a robust NHI governance framework becomes a critical vulnerability.

The Crisis of Static Credentials and Over-Privilege

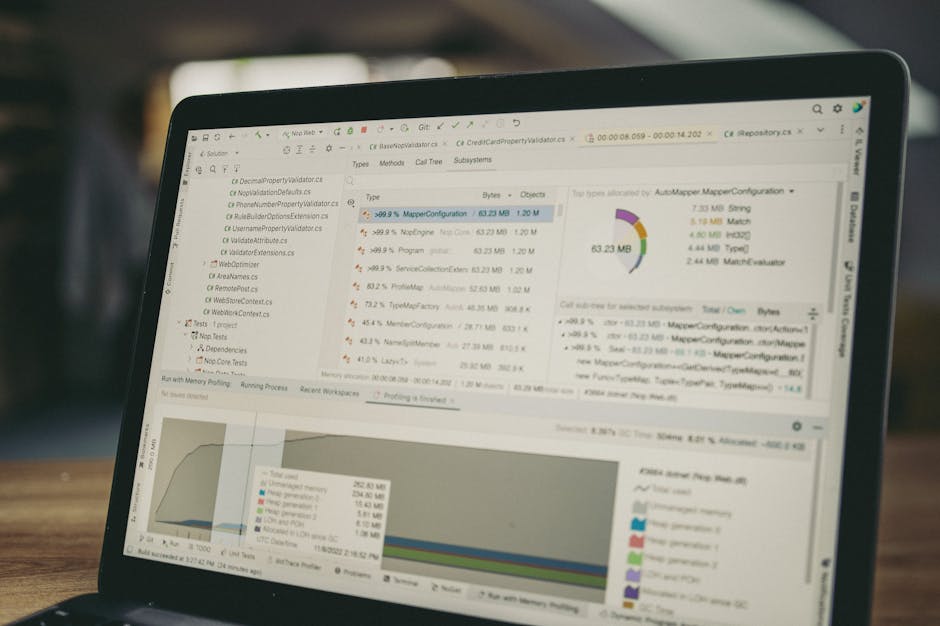

The current state of machine identity is largely rooted in static credentials. Developers frequently use long-lived API keys, OAuth tokens, and service account JSON keys to facilitate communication between services. These secrets are often stored in environment variables, CI/CD pipelines, or configuration files, where they are prime targets for theft and, once stolen, enable lateral movement.

When an AI agent is introduced into this mix, the risk profile changes. An agent tasked with "optimizing cloud costs" might be granted broad read/write access to a cloud environment. If that agent’s underlying Large Language Model (LLM) is compromised via prompt injection, or if its runtime environment is breached, the static credentials it holds give an attacker a high-privilege foothold that persists until someone rotates them. Unlike a human user, an agent does not get tired, does not trigger "unusual login time" alerts in the same way, and can issue API calls at machine speed, bounded only by rate limits.

Treating Agent Identity as a First-Class Citizen

To secure the agentic future, the industry must stop treating non-human identities as secondary metadata and start treating them as first-class security citizens. This requires a transition from "Secret Management" to "Identity Governance."

- Machine Identity Issuance and Lifecycle: Every agent must have a unique, verifiable identity rooted in a hardware or software Root of Trust. Instead of sharing a generic "dev-service-account," each instance of an agentic workflow should be issued a distinct identity. This allows for granular auditing, where security teams can trace a specific database query back to a specific agent execution run.

- Workload Identity Federation: Rather than hardcoding secrets, organizations should leverage Workload Identity Federation (such as OIDC-based exchange). For example, an agent running on an autonomous platform should exchange its short-lived platform token for a temporary cloud provider token. This removes the need for persistent secrets entirely.
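The two practices above can be sketched together. The snippet below is a minimal illustration, not a definitive implementation: it mints a distinct SPIFFE-style ID per agent run and builds the form body for an OAuth 2.0 Token Exchange request (RFC 8693). The function names, the `agent/<workflow>/run/<id>` path layout, and the example values are assumptions made for illustration. No network call is made, because the token endpoint depends on the provider.

```python
import uuid
from urllib.parse import urlencode


def new_run_identity(trust_domain: str, workflow: str) -> str:
    """Return a distinct SPIFFE-style ID for a single agent execution run.

    The path layout is a hypothetical convention, not part of the SPIFFE spec.
    """
    run_id = uuid.uuid4().hex
    return f"spiffe://{trust_domain}/agent/{workflow}/run/{run_id}"


def build_token_exchange_body(platform_token: str, audience: str, scope: str) -> str:
    """Build the form-encoded body of an RFC 8693 token exchange request.

    The short-lived platform token is presented as the subject token and a
    temporary access token is requested in return.
    """
    params = {
        "grant_type": "urn:ietf:params:oauth:grant-type:token-exchange",
        "subject_token": platform_token,
        "subject_token_type": "urn:ietf:params:oauth:token-type:jwt",
        "requested_token_type": "urn:ietf:params:oauth:token-type:access_token",
        "audience": audience,
        "scope": scope,
    }
    return urlencode(params)


if __name__ == "__main__":
    # Hypothetical values; a real platform token would be a signed OIDC JWT.
    print(new_run_identity("example.org", "cost-optimizer"))
    print(build_token_exchange_body("platform-jwt", "billing-api", "billing:read"))
```

Because each run gets its own ID, an audit entry that records the ID can be traced back to one execution rather than to a shared service account.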

The Necessity of Short-Lived, Ephemeral Credentials

The most effective way to reduce the blast radius of an agent compromise is to shrink the validity window of its credentials. In a traditional setup, a compromised API key might be valid for years. In a secure agentic framework, credentials should be ephemeral—valid only for the duration of a specific task or "turn" in the agent’s reasoning loop.

SPIFFE (Secure Production Identity Framework For Everyone) defines short-lived, verifiable identity documents for workloads in the form of X.509 certificates or JWTs, and implementations such as SPIRE issue and rotate them automatically. When an agent finishes its task, its identity and its access should expire with it. This "Just-in-Time" (JIT) access model ensures that even if an agent’s token is exfiltrated, its utility to an attacker is measured in minutes or seconds, not months.
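To make the validity window concrete, here is a minimal sketch of a task-scoped token with a short time-to-live. It uses an HMAC-signed payload from the Python standard library as a stand-in. It is not a real JWT or SPIFFE SVID, and the names `mint_token` and `verify_token` are hypothetical. A production system would rely on an established identity provider rather than hand-rolled tokens.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def _unb64(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def mint_token(key: bytes, agent_id: str, task_id: str,
               ttl_seconds: int, now: Optional[float] = None) -> str:
    """Issue a signed token that is valid only for ttl_seconds."""
    issued = int(time.time() if now is None else now)
    claims = {"sub": agent_id, "task": task_id,
              "iat": issued, "exp": issued + ttl_seconds}
    payload = _b64(json.dumps(claims, sort_keys=True).encode())
    signature = _b64(hmac.new(key, payload.encode(), hashlib.sha256).digest())
    return f"{payload}.{signature}"


def verify_token(key: bytes, token: str,
                 now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the signature is valid and the token is unexpired."""
    try:
        payload, signature = token.split(".")
    except ValueError:
        return None
    expected = _b64(hmac.new(key, payload.encode(), hashlib.sha256).digest())
    if not hmac.compare_digest(signature, expected):
        return None
    claims = json.loads(_unb64(payload))
    current = time.time() if now is None else now
    if current >= claims["exp"]:
        return None  # expired: an exfiltrated token is useless after this point
    return claims
```

With a TTL of 60 seconds, a token minted at the start of a task is rejected a minute later without any revocation step, which is the property the JIT model depends on.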

Granular Scoping and Capability-Based Security

Beyond the duration of access, we must address the breadth of access. AI agents are often over-privileged because their specific path of action is non-deterministic; developers grant broad permissions "just in case" the agent needs them to solve a problem.

The solution lies in dynamic, granular scoping. Instead of granting an agent a wildcard permission such as s3:*, we should use policy engines (like Open Policy Agent or Cedar) to evaluate each request in real time. Governance frameworks must move toward "Capability-Based Security," where an agent is granted a specific capability (e.g., "Read only the billing logs for October") rather than a general role.
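As a rough illustration of capability-style scoping, the sketch below checks each request against an explicit list of capabilities and denies everything else. It is a toy evaluator and not the API of Open Policy Agent or Cedar. The `Capability` type, the action strings, and the resource paths are assumptions chosen to mirror the "billing logs for October" example.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Iterable


@dataclass(frozen=True)
class Capability:
    action: str            # exact action name, e.g. "s3:GetObject"
    resource_pattern: str  # glob pattern over resource paths


def is_allowed(capabilities: Iterable[Capability], action: str, resource: str) -> bool:
    """Default-deny check: allow only if some capability matches exactly."""
    return any(
        cap.action == action and fnmatchcase(resource, cap.resource_pattern)
        for cap in capabilities
    )


# Hypothetical grant: read-only access to October billing logs, nothing else.
OCTOBER_BILLING_READ = [
    Capability(action="s3:GetObject", resource_pattern="billing-logs/2024-10/*"),
]
```

The design choice here is that the grant names one action and one resource scope, so an agent that wanders into writing objects or reading another month's logs is refused rather than silently permitted.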

Furthermore, we must implement "Human-in-the-Loop" (HITL) triggers for high-impact actions. An agent might have the identity to propose a firewall change, but the governance layer should require a cryptographic signature from a human-controlled identity to finalize the execution.
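One way to picture such a trigger is a gate that refuses high-impact proposals unless they carry an approval bound to the exact proposal contents. The sketch below uses an HMAC with a human-held key as a simplified stand-in for a cryptographic signature. A real deployment would more likely use asymmetric signatures from a hardware-backed key. The action names and function names are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical set of actions that always need human approval.
HIGH_IMPACT_ACTIONS = {"firewall:UpdateRule", "iam:AttachPolicy"}


def canonical(proposal: dict) -> bytes:
    """Serialize a proposal deterministically so approvals bind to its content."""
    return json.dumps(proposal, sort_keys=True, separators=(",", ":")).encode()


def approve(human_key: bytes, proposal: dict) -> str:
    """Produce an approval tag; only the holder of human_key can do this."""
    return hmac.new(human_key, canonical(proposal), hashlib.sha256).hexdigest()


def may_execute(proposal: dict, approval: Optional[str], human_key: bytes) -> bool:
    """Allow low-impact actions; require a valid approval for high-impact ones."""
    if proposal["action"] not in HIGH_IMPACT_ACTIONS:
        return True
    if approval is None:
        return False
    return hmac.compare_digest(approval, approve(human_key, proposal))
```

Because the approval covers the serialized proposal, an agent cannot obtain approval for one firewall change and then execute a different one.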

The Path Forward: Observability and Attestation

Securing non-human identities is impossible without comprehensive observability. Standard logs often show that a service account performed an action, but they fail to capture the intent or the chain of thought that led an AI agent to that action. We need "Identity-Aware Traceability," linking LLM traces to specific API calls and credential usage.
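A minimal sketch of what such a linked record could look like follows. The field names are assumptions for illustration and do not come from any particular tracing or logging standard. The point is only that one structured record carries the trace, the agent identity, the credential used, and the API call together.

```python
import json
from dataclasses import asdict, dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class AuditRecord:
    trace_id: str        # ID of the LLM trace (one agent run)
    span_id: str         # step within the trace that triggered the call
    agent_id: str        # identity of the agent instance
    credential_id: str   # identifier of the short-lived credential used
    api_call: str        # action performed, e.g. "s3:GetObject"
    resource: str        # target of the action
    stated_intent: str   # the agent's own summary of why it made the call


def to_log_line(record: AuditRecord) -> str:
    """Serialize one record as a single JSON log line."""
    return json.dumps(asdict(record), sort_keys=True)


def calls_for_trace(records: Iterable[AuditRecord], trace_id: str) -> List[AuditRecord]:
    """Return every API call that belongs to one agent reasoning trace."""
    return [r for r in records if r.trace_id == trace_id]
```

With records shaped like this, an investigator can start from a suspicious API call and recover the run, the credential, and the stated reason, or start from a trace and list everything it touched.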

Moreover, we must move toward "Attestation." Before an agent is granted a credential, the system should verify the integrity of the agent’s code and its environment. If the agent’s container image has changed or if it is running an unapproved version of a model, its identity should be automatically revoked.
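The gate described above can be sketched as a check that runs before any credential is handed out. This is a simplified illustration under stated assumptions: the evidence is trusted as given, whereas real attestation relies on signed measurements from the platform. The `Evidence` type, the allowlists, and the placeholder credential string are all hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import AbstractSet, Optional


@dataclass(frozen=True)
class Evidence:
    image_digest: str    # digest of the agent's container image
    model_version: str   # identifier of the model the agent is running


def digest_of(image_bytes: bytes) -> str:
    """Compute a sha256 digest string for an image blob."""
    return "sha256:" + hashlib.sha256(image_bytes).hexdigest()


def is_attested(evidence: Evidence,
                approved_images: AbstractSet[str],
                approved_models: AbstractSet[str]) -> bool:
    """Pass only if both the image and the model version are on the allowlist."""
    return (evidence.image_digest in approved_images
            and evidence.model_version in approved_models)


def issue_credential(evidence: Evidence,
                     approved_images: AbstractSet[str],
                     approved_models: AbstractSet[str]) -> Optional[str]:
    """Return a placeholder credential, or None when attestation fails."""
    if not is_attested(evidence, approved_images, approved_models):
        return None
    return f"credential-for:{evidence.image_digest}"
```

If the same check is repeated at each credential renewal, a changed image or an unapproved model loses access at the next renewal, which pairs naturally with short credential lifetimes.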

Conclusion

The proliferation of AI agents is outpacing our ability to secure them. Relying on legacy IAM patterns for autonomous entities is a recipe for catastrophic systemic failure. By shifting toward non-human identity governance—characterized by short-lived credentials, workload federation, and granular, intent-based scoping—we can build a foundation where agents can operate autonomously without becoming the ultimate backdoor for attackers. The future of security isn't just about managing humans; it’s about governing the machines that act on our behalf.

Author: Stacklyn Labs