The primary bottleneck in deploying production-grade Generative AI (GenAI) is rarely the model itself, but rather the quality and structure of the data feeding it. While Large Language Models (LLMs) are adept at processing text, enterprise data remains trapped in "dark" formats: unstructured PDFs, handwritten notes, complex images, and audiovisual files. AWS Bedrock Data Automation (BDA) is a specialized, serverless orchestration layer designed to bridge this gap, transforming heterogeneous, unstructured content into machine-readable, structured outputs optimized for downstream AI workflows.

The Architectural Necessity of BDA

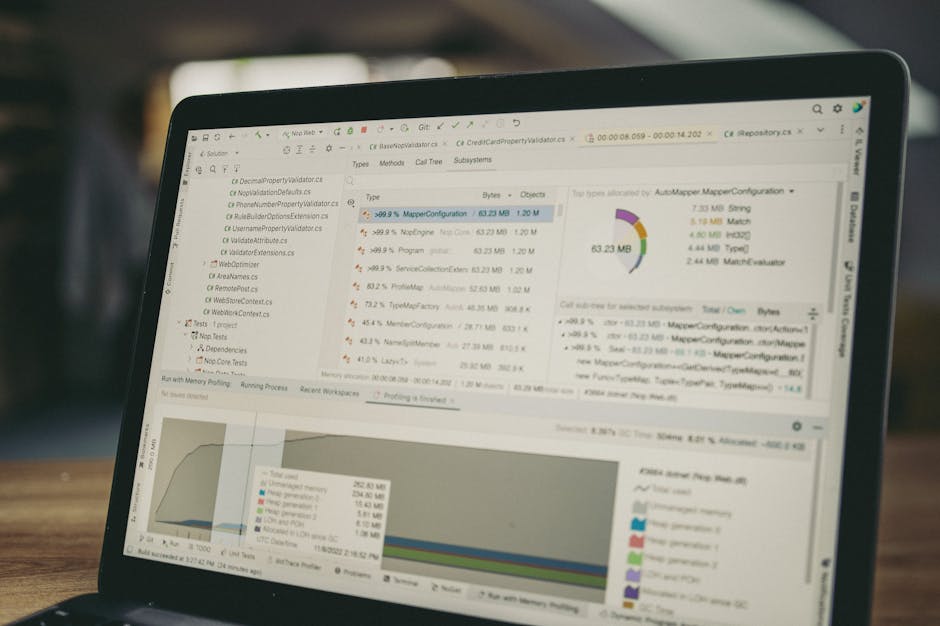

In the traditional AI development lifecycle, developers often resorted to a fragmented stack of Optical Character Recognition (OCR) tools, custom Python scripts for regex parsing, and manual prompt engineering to extract data from documents. This "DIY ETL" approach is fragile, difficult to scale, and lacks the semantic understanding required for complex multimodal data.

AWS Bedrock Data Automation formalizes this process. It functions as an automated pipeline that sits between raw storage (Amazon S3) and the intelligence layer (Amazon Bedrock models). By utilizing specialized foundation models, BDA doesn't just "read" text; it understands the spatial and semantic relationships within a document or image, enabling it to extract structured JSON or Markdown that preserves the original context.

Core Components: Projects and Blueprints

The technical foundation of BDA rests on two primary abstractions: Blueprints and Projects.

1. Blueprints: These are the configuration schemas that define how data should be interpreted and structured.

- Standard Blueprints: These are pre-trained by AWS for common document types (e.g., invoices, receipts, identity documents). They offer "out-of-the-box" extraction with high accuracy for standardized industries.

- Custom Blueprints: For specialized domains (such as legal contracts, medical lab reports, or proprietary engineering diagrams), developers can define custom schemas. These blueprints allow users to specify exactly which fields need extraction and provide guidance on how the model should handle specific nuances of the data.

2. Projects: A Project is the operational container for BDA. It links a blueprint to an execution environment. Within a project, developers configure the output format (Standard vs. Custom) and manage the lifecycle of the data automation task. The project handles the orchestration of the underlying compute resources, ensuring that as data volumes scale, the extraction throughput remains consistent.
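
To make the custom blueprint idea more concrete, here is a minimal sketch of how an extraction schema might be assembled in Python. The overall shape (a JSON Schema document whose properties carry an `instruction` and an `inferenceType`) reflects one reading of the BDA blueprint format and should be verified against the current documentation. The `build_blueprint_schema` helper and the lab-report field names are hypothetical.

```python
import json


def build_blueprint_schema(doc_class, description, fields):
    """Assemble a JSON-Schema-style blueprint body.

    `fields` is a list of (name, json_type, instruction) tuples.
    The key names `class`, `inferenceType`, and `instruction` are
    assumptions about the blueprint format; verify them before use.
    """
    properties = {
        name: {
            "type": json_type,
            # Assumed meaning: the value is read directly from the document.
            "inferenceType": "explicit",
            "instruction": instruction,
        }
        for name, json_type, instruction in fields
    }
    return {
        "$schema": "http://json-schema.org/draft-07/schema#",
        "class": doc_class,
        "description": description,
        "type": "object",
        "properties": properties,
    }


# Hypothetical fields for a medical lab report.
lab_report_schema = build_blueprint_schema(
    "lab_report",
    "Medical lab report",
    [
        ("patient_id", "string", "The patient identifier in the report header"),
        ("collection_date", "string", "The specimen collection date as YYYY-MM-DD"),
        ("hemoglobin_g_dl", "number", "The hemoglobin value in g/dL"),
    ],
)

print(json.dumps(lab_report_schema, indent=2))
```

Keeping the per-field guidance in an `instruction` string is what lets a blueprint describe domain nuances (units, date formats) without custom parsing code.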

Standard vs. Custom Output Logic

BDA offers two output modes, and the choice between them determines how much of the source material reaches downstream systems.

- Standard Output provides a comprehensive, high-fidelity representation of the source material. It captures the full content, including tables, headers, and visual elements, typically in a structured Markdown or JSON format. This is ideal for feeding Retrieval-Augmented Generation (RAG) systems where the model needs the entire context to ground its answers.

- Custom Output allows for precision extraction. By providing a JSON schema within the blueprint, developers can force the BDA engine to return only specific data points (e.g., "Total Amount Due" and "Due Date"). This significantly reduces the token overhead for downstream LLM calls and enables direct integration into traditional databases or ERP systems.
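
As a rough illustration of the database-integration point, the sketch below loads a custom-output payload into SQLite and runs a query over it. The record layout and field names (`invoice_id`, `total_amount_due`, `due_date`) are hypothetical stand-ins, not the actual BDA response format.

```python
import json
import sqlite3

# Hypothetical custom-output payload: only the fields the blueprint asked for.
custom_output_json = """
[
  {"invoice_id": "INV-001", "total_amount_due": 1250.00, "due_date": "2025-03-01"},
  {"invoice_id": "INV-002", "total_amount_due": 310.50, "due_date": "2025-02-15"}
]
"""


def load_invoices(conn, payload):
    """Parse the JSON payload and insert one row per extracted record."""
    records = json.loads(payload)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS invoices ("
        "invoice_id TEXT PRIMARY KEY, total_amount_due REAL, due_date TEXT)"
    )
    conn.executemany(
        "INSERT INTO invoices VALUES (:invoice_id, :total_amount_due, :due_date)",
        records,
    )
    conn.commit()
    return len(records)


conn = sqlite3.connect(":memory:")
inserted = load_invoices(conn, custom_output_json)
total_due = conn.execute("SELECT SUM(total_amount_due) FROM invoices").fetchone()[0]
print(f"{inserted} invoices loaded, total due: {total_due}")
```

Because the payload already matches the table columns, no LLM call or text parsing is needed between extraction and storage.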

The Multimodal Advantage

Unlike legacy OCR engines that treat images and text as separate layers, BDA is inherently multimodal. When processing a complex PDF, BDA analyzes the visual layout, the relationship between labels and values in a table, and even the semantic meaning of embedded images.

For video and audio content, BDA is expected to provide automated tagging and summarization, transforming temporal data into chronological metadata. This allows an AI agent to "query" a video by searching for specific events or spoken keywords, which are indexed via the structured output generated by the BDA pipeline.
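
One way to picture "querying" a video is as a keyword search over timestamped segments. The sketch below assumes a simplified, hypothetical segment structure (`start_s`, `end_s`, `summary`, `transcript`); the real BDA video output will differ in shape and naming.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical timestamped unit of structured video metadata."""
    start_s: float
    end_s: float
    summary: str
    transcript: str


def find_segments(segments, keyword):
    """Return segments whose summary or transcript mentions the keyword."""
    needle = keyword.lower()
    return [
        s for s in segments
        if needle in s.summary.lower() or needle in s.transcript.lower()
    ]


segments = [
    Segment(0.0, 42.5, "Host introduces the product", "Welcome to the quarterly demo"),
    Segment(42.5, 118.0, "Live demo of the refund flow", "Now let's process a refund for this order"),
    Segment(118.0, 180.0, "Audience questions", "How does pricing work"),
]

hits = find_segments(segments, "refund")
for s in hits:
    print(f"{s.start_s:.1f}s to {s.end_s:.1f}s: {s.summary}")
```

In practice an agent would likely search an index built from this metadata rather than scan a list, but the lookup key (text tied to a time range) is the same.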

Integration into AI Ecosystems

AWS Bedrock Data Automation is not a siloed tool; it is designed to serve as the "pre-processor" for three major AI implementation patterns:

- Optimized RAG (Retrieval-Augmented Generation): High-quality RAG requires precise chunking. By providing structured Markdown, BDA ensures that tables and lists remain intact during the embedding process, preventing the loss of semantic meaning that occurs when text is split arbitrarily.

- Agentic Workflows: AI Agents require structured inputs to call tools and APIs. BDA can take a raw customer email with an attached photo of a damaged product, extract the customer ID and damage details into JSON, and pass that data to an agent capable of initiating a refund.

- Automated Analytics: By converting vast quantities of unstructured historical documents into structured datasets, organizations can perform traditional SQL-based analytics on data that was previously inaccessible to business intelligence tools.
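
The RAG point about keeping tables intact can be sketched with a structure-aware chunker. This is a minimal illustration, assuming the Markdown separates blocks (paragraphs, tables, lists) with blank lines; `chunk_markdown` is a hypothetical helper, not part of any AWS SDK.

```python
def chunk_markdown(markdown, max_chars=500):
    """Group blank-line-separated blocks into chunks without splitting a block.

    A block longer than max_chars becomes its own oversized chunk, so a
    table is never cut in the middle of its rows.
    """
    blocks = [b.strip() for b in markdown.split("\n\n") if b.strip()]
    chunks, current = [], ""
    for block in blocks:
        candidate = f"{current}\n\n{block}" if current else block
        if current and len(candidate) > max_chars:
            # Adding this block would overflow: close the current chunk.
            chunks.append(current)
            current = block
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


sample = (
    "# Invoice 42\n\n"
    "Payment terms are net 30.\n\n"
    "| Item | Qty | Price |\n"
    "|------|-----|-------|\n"
    "| Bolt | 10  | 2.50  |\n"
    "| Nut  | 20  | 1.25  |\n\n"
    "Contact billing with any questions."
)

chunks = chunk_markdown(sample, max_chars=60)
for i, chunk in enumerate(chunks):
    print(f"--- chunk {i} ---\n{chunk}")
```

A fixed-width splitter with the same 60-character limit would cut the table mid-row; splitting on block boundaries trades uniform chunk size for intact structure.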

The Developer Workflow

Implementing BDA typically follows a four-step programmatic flow:

1. Data Ingestion: Store raw files (PDFs, JPEGs, PNGs) in a designated Amazon S3 bucket.

2. Blueprint Configuration: Define the extraction requirements via the AWS Management Console or the Bedrock API. This includes selecting the model specialized for data extraction.

3. Project Invocation: Initiate a BDA job, pointing the project to the S3 source. Because BDA is serverless, there is no infrastructure to manage.

4. Downstream Consumption: Retrieve the structured output from the destination S3 bucket. The output is ready for indexing in an Amazon OpenSearch Service vector store or for direct ingestion into a Bedrock Knowledge Base.
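
The invocation and retrieval steps can be sketched as a small submit-and-poll helper. The operation and parameter names below (`invoke_data_automation_async`, `get_data_automation_status`, `inputConfiguration`, and so on) follow one reading of the boto3 `bedrock-data-automation-runtime` client and should be verified against the current SDK documentation. The helper names, ARNs, and S3 URIs are hypothetical.

```python
import time


def build_invocation_request(input_s3_uri, output_s3_uri, project_arn, profile_arn):
    """Build keyword arguments for an asynchronous BDA invocation.

    Key names are assumptions based on the boto3 runtime client.
    """
    return {
        "inputConfiguration": {"s3Uri": input_s3_uri},
        "outputConfiguration": {"s3Uri": output_s3_uri},
        "dataAutomationConfiguration": {
            "dataAutomationProjectArn": project_arn,
            "stage": "LIVE",
        },
        "dataAutomationProfileArn": profile_arn,
    }


def run_job(client, request, poll_seconds=5.0, max_polls=60):
    """Start a job, then poll until it leaves the pending states."""
    invocation_arn = client.invoke_data_automation_async(**request)["invocationArn"]
    for _ in range(max_polls):
        status = client.get_data_automation_status(invocationArn=invocation_arn)
        # "Created" and "InProgress" are assumed to be the non-terminal states.
        if status["status"] not in ("Created", "InProgress"):
            return status
        time.sleep(poll_seconds)
    raise TimeoutError(f"Job {invocation_arn} did not finish in time")


# Usage sketch (requires boto3 and AWS credentials; values are placeholders):
#   import boto3
#   client = boto3.client("bedrock-data-automation-runtime")
#   request = build_invocation_request(
#       "s3://my-input-bucket/invoice.pdf",
#       "s3://my-output-bucket/results/",
#       "<project ARN>",
#       "<profile ARN>",
#   )
#   result = run_job(client, request)
```

Passing the client in as an argument keeps the helper testable with a stub and leaves credential and region handling to the caller.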

Conclusion

AWS Bedrock Data Automation represents a shift from "data extraction" to "data intelligence." By automating the transformation of multimodal, unstructured content into structured formats, it removes the highest barrier to AI adoption: the data preparation phase. For senior engineers, BDA provides a scalable, managed alternative to custom extraction pipelines, ensuring that the data entering the AI workflow is as refined and actionable as the models processing it.

Author: Stacklyn Labs